Collecting RGB-D Datasets on LiDAR-Enabled iOS Devices

March 10, 2021

Update 17th of March 2021: Updated links, expanded some sections.

The iPhone 12 Pro and iPad Pro now ship with built-in LiDAR sensors. On-device AR applications are fun, but you quickly run into serious limitations if you want to do heavy processing on the collected data. What I wanted to do was export the LiDAR and video data for labeling, then use the labeled data to train machine learning models. Another application would be 3D reconstruction or dense mapping.

To do this, I built Stray Scanner.

It allows you to record video with corresponding depth maps and the visual-inertial odometry estimated by ARKit. The odometry contains the rotation and translation of the camera for each frame, relative to a global coordinate frame.
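To make the rotation and translation concrete, here is a minimal sketch of how one odometry sample could be turned into a 4x4 camera-to-world transform. It assumes the rotation is given as a unit quaternion in (x, y, z, w) order and the translation in meters. The function name `pose_matrix` and that ordering are my own choices for illustration, so check them against the files you actually export.

```python
import numpy as np

def pose_matrix(translation, quaternion_xyzw):
    """Build a 4x4 camera-to-world transform from a translation and a quaternion.

    Assumption: the quaternion is ordered (x, y, z, w).
    """
    q = np.asarray(quaternion_xyzw, dtype=float)
    x, y, z, w = q / np.linalg.norm(q)  # normalize to guard against rounding
    rotation = np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - z * w), 2 * (x * z + y * w)],
        [2 * (x * y + z * w), 1 - 2 * (x * x + z * z), 2 * (y * z - x * w)],
        [2 * (x * z - y * w), 2 * (y * z + x * w), 1 - 2 * (x * x + y * y)],
    ])
    T = np.eye(4)
    T[:3, :3] = rotation
    T[:3, 3] = translation
    return T

# Map a point from the camera frame into the global frame.
T_world_camera = pose_matrix([1.0, 2.0, 3.0], [0.0, 0.0, 0.0, 1.0])
point_camera = np.array([0.0, 0.0, 1.0, 1.0])  # homogeneous coordinates
point_world = T_world_camera @ point_camera
```

With one such matrix per frame, the camera trajectory is simply the sequence of translation columns.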

Below is a demonstration of the app and a small glimpse of what you can do with this sort of data.

Collecting Data

Datasets are recorded using a camera-like interface.

We then transfer the collected datasets to a desktop computer, where we can play around with them. This can be done by connecting the phone to the computer and dragging the datasets over using Finder.

Visualizing the Data

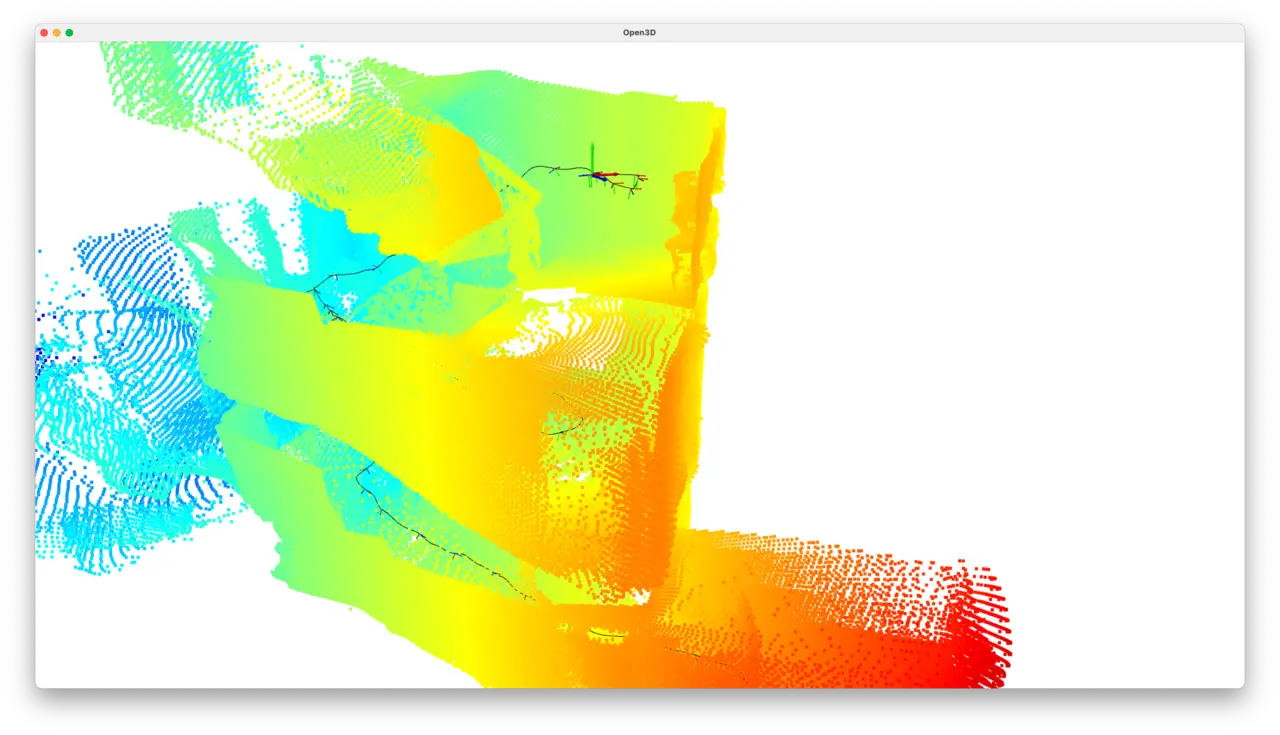

I collected a short dataset while walking down a flight of stairs. Here is what the depth capture looks like for this recording.

If you are wondering why things look so small and blurry, it is because the depth maps captured by iPhones and iPads have a resolution of only 192x256.
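Because the depth maps are much smaller than the video frames, camera intrinsics calibrated for the RGB image have to be rescaled before they can be used with the depth map. The sketch below shows the idea. It assumes that 192x256 means 192 rows by 256 columns, and the RGB resolution and intrinsic values are made-up numbers for illustration. It also ignores half-pixel offsets, which is good enough for a quick visualization.

```python
def scale_intrinsics(fx, fy, cx, cy, rgb_size, depth_size):
    """Rescale pinhole intrinsics from the RGB resolution to the depth resolution.

    Both sizes are (width, height) tuples in pixels.
    """
    sx = depth_size[0] / rgb_size[0]
    sy = depth_size[1] / rgb_size[1]
    return fx * sx, fy * sy, cx * sx, cy * sy

# Hypothetical RGB intrinsics for a 1920x1440 frame, scaled to a 256x192 depth map.
fx, fy, cx, cy = scale_intrinsics(
    1500.0, 1500.0, 960.0, 720.0,
    rgb_size=(1920, 1440),
    depth_size=(256, 192),
)
```

The alternative is to upsample the depth map to the RGB resolution, which keeps the original intrinsics but does not add any real detail.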

I built a script using Python and Open3D to further visualize the data. The script, along with some other tools and information, can be found here.

Running python stray_visualize.py --frames --trajectory --point-clouds shows the trajectory the camera took, as well as the point clouds created from each depth map.
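Creating a point cloud from a depth map comes down to back-projecting every pixel through a pinhole camera model. The following is a sketch of that step in plain NumPy, not the code from the visualizer script. It assumes the depth map is already in meters, and the intrinsics in the example are placeholders.

```python
import numpy as np

def depth_to_points(depth_m, fx, fy, cx, cy):
    """Back-project an HxW depth map in meters into an Nx3 array of camera-frame points."""
    height, width = depth_m.shape
    # u holds the column index and v the row index of every pixel.
    u, v = np.meshgrid(np.arange(width), np.arange(height))
    z = depth_m
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    return points[points[:, 2] > 0]  # drop pixels without a depth reading

# A flat wall two meters in front of the camera, with placeholder intrinsics.
depth = np.full((192, 256), 2.0)
points = depth_to_points(depth, fx=200.0, fy=200.0, cx=128.0, cy=96.0)
```

Multiplying these points by the camera pose of the frame moves them into the global coordinate frame, which is how clouds from different frames end up lining up in one scene.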

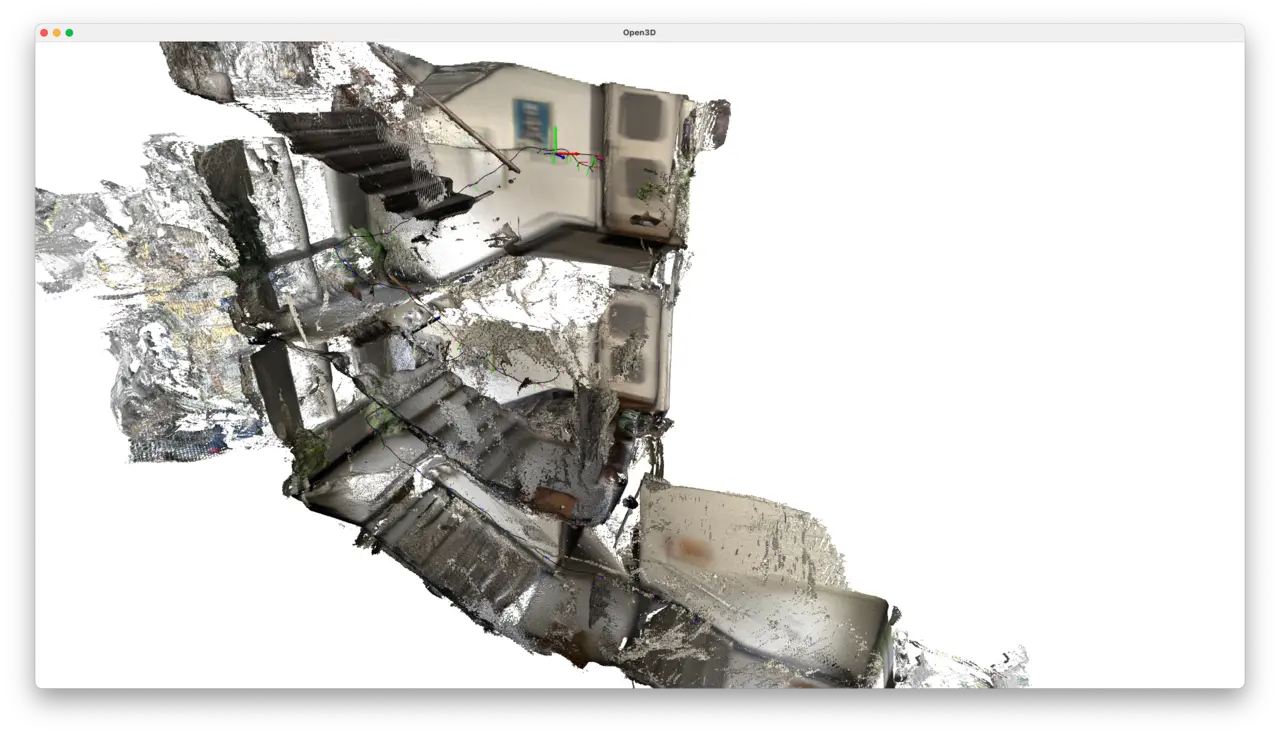

Reconstructing a 3D Mesh

I ran the data through the Open3D RGB-D integration pipeline.

This can be done using the same script as above with the command python stray_visualize.py --frames --trajectory --integrate.

I used a voxel size of 1.5 centimeters for this scene.

In this example, I naively perform RGB-D integration, which assumes the visual-inertial odometry is correct. In practice it is not, since the pose estimates drift over time. It is still good enough for a quick visualization. For a proper 3D reconstruction, extra steps such as pose graph optimization would improve the results drastically.
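For reference, the naive integration described above could look roughly like this with Open3D's TSDF volume. This is a sketch under my own assumptions rather than the exact code in the script: the frames are assumed to be already loaded as Open3D images of equal size, the depth is assumed to be stored in millimeters, and the truncation values are picked for illustration. The names `integrate_frames` and `world_to_camera` are hypothetical.

```python
import numpy as np

def world_to_camera(T_world_camera):
    """Invert a rigid camera-to-world transform using R^T and -R^T t."""
    R = T_world_camera[:3, :3]
    t = T_world_camera[:3, 3]
    T = np.eye(4)
    T[:3, :3] = R.T
    T[:3, 3] = -R.T @ t
    return T

def integrate_frames(frames, intrinsic, voxel_size=0.015):
    """Fuse (color, depth, T_world_camera) tuples into a triangle mesh.

    color and depth are open3d.geometry.Image objects of the same size and
    intrinsic is an open3d.camera.PinholeCameraIntrinsic matching that size.
    """
    # Imported here so the pose helper above stays usable without Open3D.
    import open3d as o3d

    volume = o3d.pipelines.integration.ScalableTSDFVolume(
        voxel_length=voxel_size,    # 1.5 cm, as used for the staircase scene
        sdf_trunc=4 * voxel_size,   # assumption: truncate at a few voxels
        color_type=o3d.pipelines.integration.TSDFVolumeColorType.RGB8,
    )
    for color, depth, T_world_camera in frames:
        rgbd = o3d.geometry.RGBDImage.create_from_color_and_depth(
            color, depth,
            depth_scale=1000.0,     # assumption: depth stored in millimeters
            depth_trunc=4.0,        # assumption: ignore readings beyond 4 m
            convert_rgb_to_intensity=False,
        )
        # Open3D expects the world-to-camera extrinsic, so the pose is inverted.
        volume.integrate(rgbd, intrinsic, world_to_camera(T_world_camera))
    return volume.extract_triangle_mesh()
```

Every frame is fused using its odometry pose as-is, so any drift in those poses ends up baked into the mesh.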

Concluding

I’ve published the app on the App Store, where you can download it and use it in any of the projects you dream up.

Best of all, the app doesn’t contain any tracking or analytics software. All the datasets you record are stored on your device. They only leave the device when you move them yourself.

If you find any bugs, you can report them in the issues section of the visualizer repository.